BIM AND NATHERS HEADACHES

Given that my blog doesn't get updated much and there is no other obvious output, lots of people ask me what exactly I am working on at the moment and why it seems to be taking forever. Usually I just mutter the odd platitude, but today I thought I would combine a bit of a rant with an actual example of the kind of problems I am currently and seemingly constantly wrestling with. This one involves the ideal of determining NatHERS compliance directly from a fully integrated building information model (BIM).

Overview

The core idea of BIM is to maintain a central repository of information that models as closely as practical the built form of the building being designed, and to allow the different disciplines involved to directly access and update those bits of information that they need or are responsible for. The design team and consultants work together to embed within the model everything required to appraise, analyse and approve the design as well as cost, commission and construct the final building.

I have been working on some Revit plugins that extract the kind of data required for viable thermal and energy analysis models. The obvious extension of that work is to then automatically calculate the information required to demonstrate compliance with various building energy regulations in different countries. I’m looking mainly at ASHRAE 90.1 in the US, NCM in the UK, Th-BCE 2012 in France, EnEV in Germany, CALENER and CERMA in Spain, RCCTE in Portugal and, being the subject of this post, NatHERS in Australia.

These regulations have huge variation in the kinds of information required and the mechanisms by which compliance can be demonstrated. Some are prescriptive with deemed-to-comply conditions and recommended details. Some permit a qualified engineer to use their own expertise to model and certify a project. Quite a few others, like NatHERS, specify a mandatory calculation engine that must be used. Bringing all these together into a manageable BIM-based workflow is an immensely interesting challenge and one that would be fantastic to solve.

The Problem

Thus we come to the real subject of this rant. Too many mandatory calculation engines required for regulatory approval seem tailored to and focussed on manual data input rather than automated data extraction. By that I mean that you must use some form of custom user interface to manually build the analysis model - redrawing floor plans, typing in data fields and selecting from quite limited or prescribed sets of options - in order to perform any analysis or submit for approval.

This creates two pretty critical problems from my perspective.

1. No Integrated Workflow

This means that there is almost no scope to directly use any of the information you already have in the BIM database without having to manually transcribe it via the custom user interface. You may be able to load in a bitmap or PDF plan that you can trace over, but you certainly won’t be able to load an IFC or gbXML file directly. The tool itself will also typically use some unreadable proprietary data format to store and reload its input data, and often its output as well, for which there will be little or no documentation. There will certainly be no externally accessible API and no way to batch test multiple options or set up automated sensitivity analysis.

Thus the potential for any integrated workflow with your BIM repository is virtually non-existent.

A related issue is that most such tools specifically state that they should only be used for final certification and not ongoing design analysis. However, we all know that code compliance is uppermost in every designer’s mind, and the last thing anyone needs is a tool that can only be used at the very end of the design process, where it will very likely force some last-minute changes. We really need to find out all of the potential compliance issues much closer to the start of the design process than the end, in order to properly investigate a wide range of integrated and potentially disruptive solutions instead of just simple cosmetic changes selected in haste to get the project over the line.

The implicit recommendation seems to be to use other tools for design development (something like EnergyPlus, DOE-2, IES, DesignBuilder, etc.) and then use NatHERS at the end for certification. Some designers will do that. However, the space load and occupancy conditions that the NatHERS engine applies are not readily available, so any analysis performed using these other tools is unlikely to match what NatHERS will calculate. This uncertainty often dampens all enthusiasm for any form of modelling that isn’t directly compliance-based.

Thus we have a situation where governments around the world are actively promoting BIM workflows, and in some cases even requiring them for government-related building projects. Yet at the same time many are mandating the use of energy calculation engines that not only fail to integrate within a BIM workflow, but whose use in that way is actively discouraged.

2. Diverging Domain Models

In my experience, the more an analysis engine relies on manual data input, the more its input requirements are likely to diverge from those representing the actual building being modelled. This typically means things like requiring some simplification of geometry, ignoring or merging adjacent spaces or being able to select from only a very limited palette of conditions or construction materials. This is usually done to make the input task ‘easier’, but also very often masks underlying limitations in the engine’s ability to accurately model real building situations.

To explain this better, it is probably best to use an illustrative example.

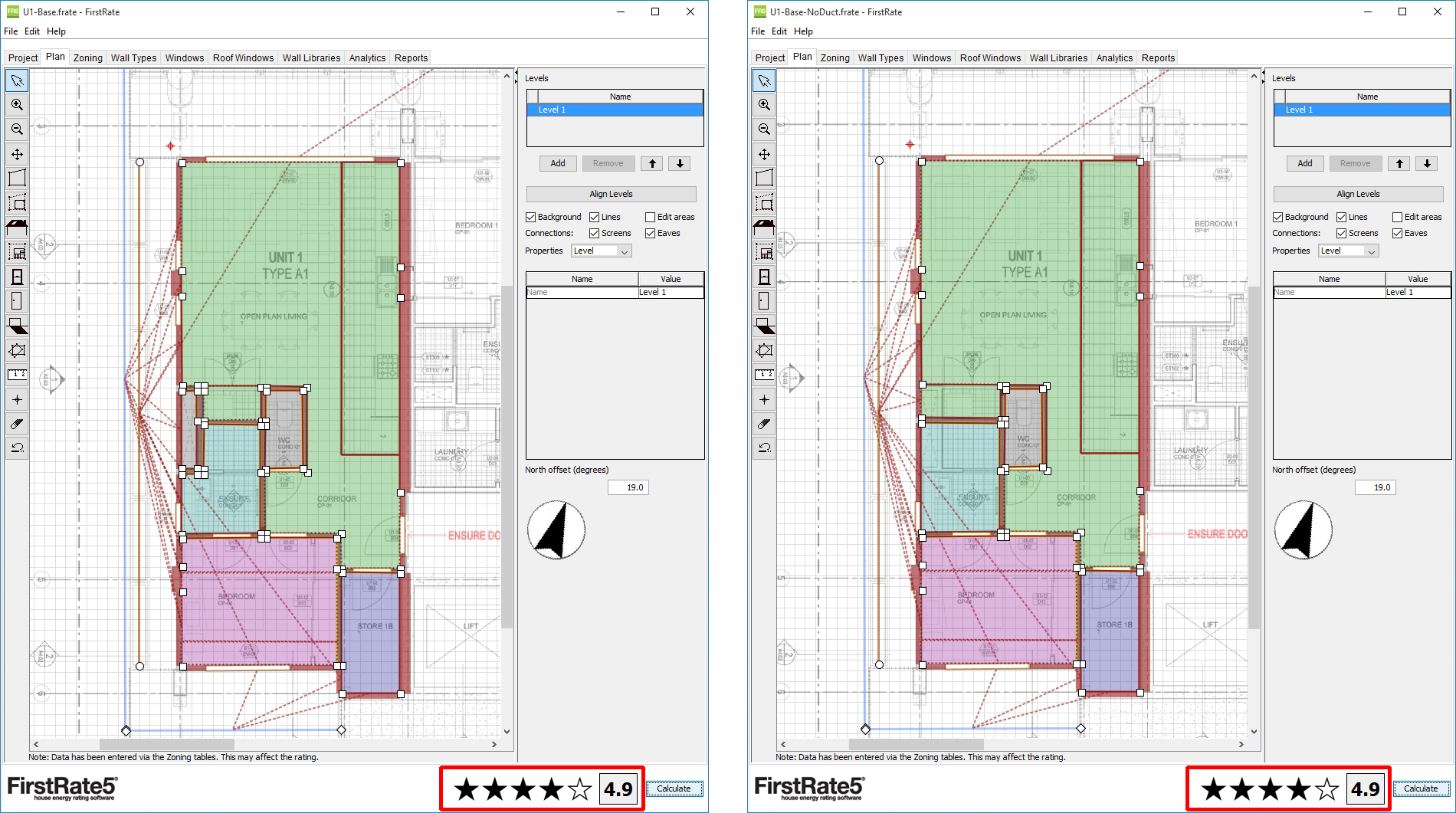

Shown here are two energy models of the same apartment. The one on the left shows what would be extracted directly from a Revit model, and the one on the right is how a NatHERS accredited assessor would likely model the same apartment according to the latest technical notes. I have modelled the examples here in FirstRate5 because it uses the mandatory CHEENATH engine from CSIRO (as required by NatHERS), and it is freely available so anyone can download the example files and verify what I’m saying.

All looks pretty good thus far. Both models achieve exactly the same rating of 4.9 stars, which is a bit below compliance because the apartment sits on the floor immediately above a carpark and so has an exposed floor slab. Thus both models need some work to make them more efficient and therefore compliant.

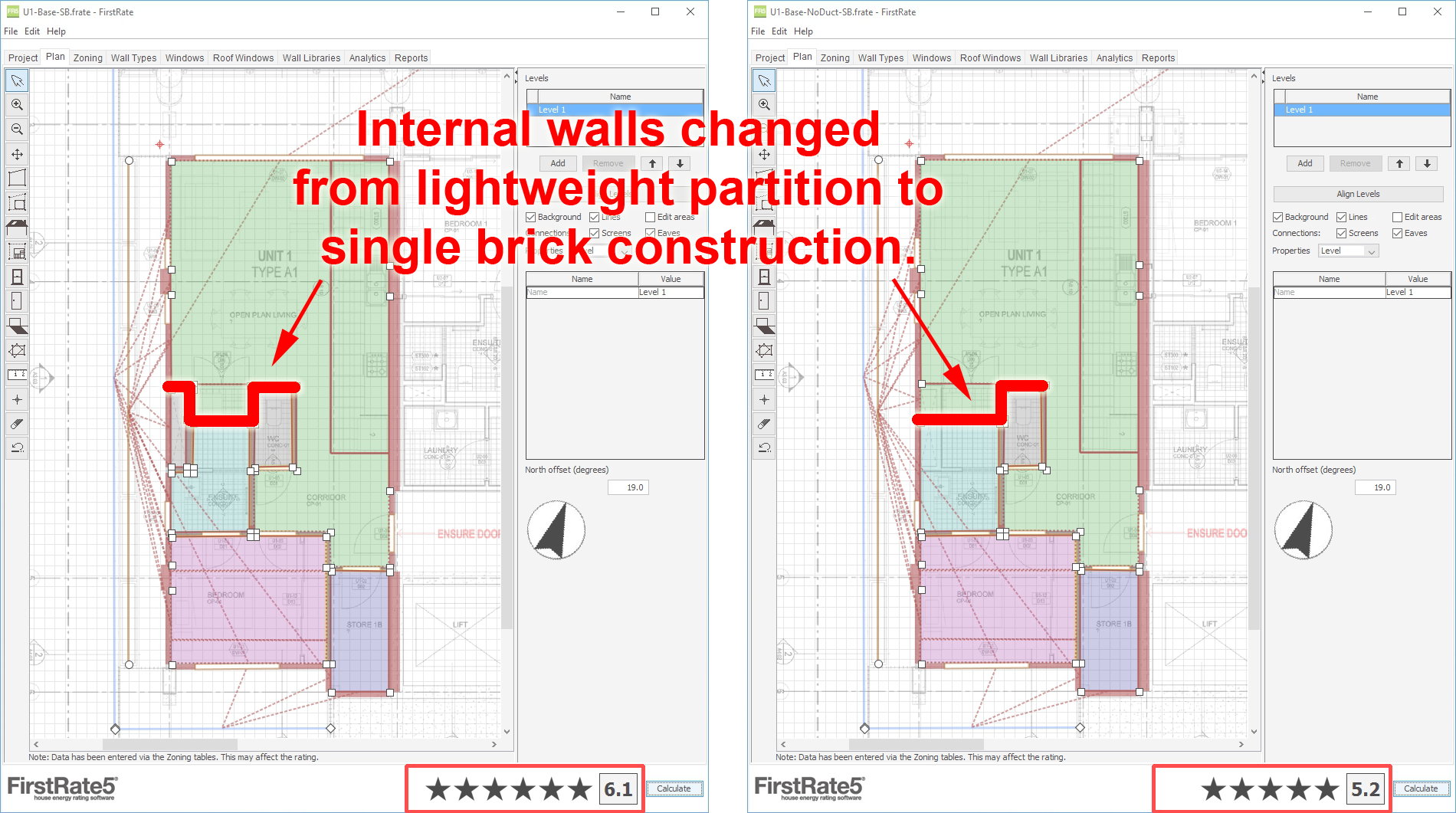

As it turns out, I can get the model on the left side up to 6.1 stars pretty easily by simply changing a small section of internal wall from lightweight partition to single-brick construction, as indicated in the accompanying image. Changing the same section of wall in the model on the right shows nowhere near the same effect.

If you would like to verify that this is the only change to the models, I have made all the models used above available to download as a zip file. You can obtain a free copy of FirstRate5 via https://www.fr5.com.au/.

Those of you with some knowledge of NatHERS will instantly spot that Section 7.6.1 of Technical Note 1.2 clearly requires you to incorporate small air spaces such as ducts into any zones that they are attached or adjacent to.

7.6.1 Small air spaces such as small pantries, built in robes, plumbing voids, wall voids, return air ducts and other small non-habitable areas are included in the zone that they are attached to or located in. A small pantry is one that cannot be walked into.

The core problem here is that internal temperatures within the duct in the left model are getting ridiculously high in summer. Because the enclosed air mass is so small, adding thermal mass to the intervening wall is enough to dampen them significantly. This doesn’t fully explain the disproportionate increase in star rating due to the other two segments of changed wall, especially as adding similar internal mass to the ductless model on the right shows no such effect.

Also, it is not immediately obvious why the duct space is apparently so different to those around it, other than being quite a bit smaller. There is some limited and not particularly applicable information on the NatHERS website, and I presume that an exhaustive search would eventually turn up a research paper documenting the space conditions and load profiles being applied. However, given the tools readily at hand, it could be assuming a well-attended mid-summer-day disco within that particular duct for all I know.

Thus, given the requirement to merge small air spaces within the technical notes, it looks like this is a bit of a software issue - due either to how the CHEENATH engine handles small spaces or how NatHERS assigns conditions and load profiles to them.

To be very clear, I am certainly not attacking CHEENATH or the NatHERS approach. All software has the occasional idiosyncrasy and not all complex phenomena can be perfectly modelled. However, the ramifications for me of issues like this are particularly important.

Ramifications

When the National Calculation Method (NCM) was first introduced in the UK, I did buy into the idea that it probably didn’t matter if the SBEM calculation engine was initially a little flawed. What mattered was that the industry got used to using it and that, over time, those flaws would be slowly ironed out and better buildings would result. However, once established, it seems to be much more difficult than previously imagined to change the calculation engine in any substantive way. There are far too many entrenched interests and it would appear that some flaws are simply too difficult to deal with.

So too it seems with NatHERS. Rather than deal with the small air space issue within the calculation engine itself, the solution seems to be to change the input model to work around it. This means that the layout required for compliance begins to diverge substantively from the layout of the actual building model being created by the design team in the BIM repository.

So what does a software developer do? Do you require an additional layer of information within the BIM repository with a secondary plan that doesn’t include ducts, or do you devise a method for automatically merging ducts with adjacent spaces? The automatic merge option is probably the best, but also the most work and a significant source of uncertainty. You will never know if your algorithm works for all cases. Sure, you will test and solve all the cases you can think of, but there will always be the odd edge case for which it won’t work properly. Also, different software developers will devise different algorithms, so the same building in two different tools could potentially generate two different results - exactly the thing that most mandatory calculation engines were intended to prevent.
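To make the automatic merge option a little more concrete, here is a minimal Python sketch of one possible rule: fold any space below an area threshold into the neighbour it shares the longest wall with. Everything in it is an assumption made for illustration. The `Space` class, the `merge_small_spaces` function, the 1.0 m² threshold and the sample apartment are all hypothetical, and neither the technical notes nor any accredited tool prescribes this rule.

```python
from dataclasses import dataclass, field


@dataclass
class Space:
    """Hypothetical stand-in for a room or void extracted from the BIM model."""
    name: str
    area_m2: float
    # neighbour name -> length of wall shared with that neighbour, in metres
    shared_wall_m: dict = field(default_factory=dict)


def merge_small_spaces(spaces, max_area_m2=1.0):
    """Fold each space smaller than max_area_m2 into the neighbour it shares
    the longest wall with. The threshold is an assumed value, not one taken
    from the NatHERS technical notes."""
    # Work on copies so the caller's model is left untouched.
    model = {s.name: Space(s.name, s.area_m2, dict(s.shared_wall_m)) for s in spaces}
    candidates = sorted(
        (s for s in model.values() if s.area_m2 < max_area_m2),
        key=lambda s: s.area_m2,
    )
    for small in candidates:
        # Skip spaces that grew past the threshold through an earlier merge,
        # and isolated spaces that have nothing to merge into.
        if small.area_m2 >= max_area_m2 or not small.shared_wall_m:
            continue
        host_name = max(small.shared_wall_m, key=small.shared_wall_m.get)
        host = model[host_name]
        host.area_m2 += small.area_m2
        host.shared_wall_m.pop(small.name, None)
        # Walls the small space shared with other rooms now belong to the host.
        for other_name, length in small.shared_wall_m.items():
            if other_name == host_name:
                continue
            other = model[other_name]
            other.shared_wall_m.pop(small.name, None)
            other.shared_wall_m[host_name] = other.shared_wall_m.get(host_name, 0.0) + length
            host.shared_wall_m[other_name] = host.shared_wall_m.get(other_name, 0.0) + length
        del model[small.name]
    return list(model.values())


# Invented sample data: a duct wedged between a bedroom and a bathroom.
apartment = [
    Space("bedroom", 12.0, {"bathroom": 3.0, "duct": 0.6}),
    Space("bathroom", 5.0, {"bedroom": 3.0, "duct": 0.5}),
    Space("duct", 0.3, {"bedroom": 0.6, "bathroom": 0.5}),
]
for space in merge_small_spaces(apartment):
    print(space.name, round(space.area_m2, 2), space.shared_wall_m)
```

Even in this toy version the uncertainty is visible. The duct goes to the bedroom only because its shared wall there is 0.1 m longer, and a tie would be settled by nothing more principled than dictionary order. Another developer could just as reasonably merge by largest adjacent area or by shared door, and get a different input model from the same building.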

This is the real crux of the problem. I am fully aware that analytical models often have to be specially crafted and will differ from the physical model. However, workarounds for issues like this are relatively trivial to accommodate if someone is manually creating the analysis model from scratch in a tool like FirstRate5 or any of the other accredited software. Thus the temptation is to always deal with such issues through technical notes and massaging the input model. However, if you are trying to extract your input model directly from BIM, your floor plans will include that duct.

The Solution

Basically, there is no immediate solution. However, there are some relatively simple things we can do to navigate out of the current situation over time.

First, we need to move towards the use of open and readable file formats based on well documented data schemas to promote free data exchange with these tools. If the data schemas are available, creative people will write converters for all sorts of different purposes and software developers will find ways to solve the various pain points associated with their use. This is an easy thing to say, but even if none of that eventuates, shouldn’t the process of mandatory compliance be as open and transparent as possible, with the inputs more readily accessible to design audit processes and even document versioning systems as required for QA (ISO 9001) and EMS (ISO 14001)?
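As a rough indication of how little code a documented, readable format demands, the Python sketch below lists the spaces in a gbXML fragment using only the standard library. The fragment is hand-written for this example and is nowhere near a complete, schema-valid export, and the `list_spaces` helper is hypothetical. Only the namespace and the `Space`, `Name` and `Area` element names come from the published gbXML schema.

```python
import xml.etree.ElementTree as ET

# Namespace used by the published gbXML schema.
GBXML_NS = {"gb": "http://www.gbxml.org/schema"}

# Invented, heavily trimmed fragment, for illustration only.
SAMPLE = """<gbXML xmlns="http://www.gbxml.org/schema" areaUnit="SquareMeters">
  <Campus id="campus-1">
    <Building id="bldg-1" buildingType="MultiFamily">
      <Space id="sp-1"><Name>Bedroom</Name><Area>12.0</Area></Space>
      <Space id="sp-2"><Name>Riser duct</Name><Area>0.3</Area></Space>
    </Building>
  </Campus>
</gbXML>"""


def list_spaces(gbxml_text):
    """Return (id, name, area) for every Space element in a gbXML document."""
    root = ET.fromstring(gbxml_text)
    spaces = []
    for space in root.iterfind(".//gb:Space", GBXML_NS):
        name = space.findtext("gb:Name", default="", namespaces=GBXML_NS)
        area = float(space.findtext("gb:Area", default="0", namespaces=GBXML_NS))
        spaces.append((space.get("id"), name, area))
    return spaces


for space_id, name, area in list_spaces(SAMPLE):
    print(space_id, name, area)
```

Nothing comparable is possible against an undocumented proprietary file, which is the whole point. With a readable schema, an audit script, a converter or a version-control diff is an afternoon's work rather than a reverse-engineering project.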

Second we need sufficient confidence in the capabilities of these mandatory calculation engines in order to promote their use as an integral part of the iterative design process, helping guide and inform design decisions as the project develops. If the engines themselves are not capable, then surely the answer is to either enhance their capabilities or integrate other tools which are. Don’t simply train a group of specialist consultants how to work around their limitations. When created with public money, these tools should really be open for use by all - so target them at the people most likely to benefit and want to use them anyway, building designers and owners. Obviously garbage-in-garbage-out is an issue, so there is still room for specialist consultants in sign off and final certification, but the BIM data extraction process has a role to play here as it can be made pretty smart at detecting and cleaning up a lot of the garbage right from the start.

If the integration of these mandatory compliance tools into a BIM-based workflow can be made as simple as possible, it will hopefully encourage designers to experiment further and objectively test more diverse options whilst they are making the key design decisions that will determine energy performance. This means governments moving away from compliance-only tools driven by a priesthood of specially accredited assessors and towards robust and informative tools that feed back into the processes that designers and engineers already use.

Without this, designers end up knowing less about the thermal performance of their own buildings and become much more reliant on the recommendations of others. It makes energy efficient design much less about your own iterative investigation or exhaustive sensitivity analysis, and more about simply selecting from a set of rectification options provided by your assessor. It would take a very brave assessor indeed to recommend a fundamental redesign of a project, even if it was obviously needed, whereas that very thing could have been a no-brainer if the designer had realised it for themselves much earlier in the process. As I see it, giving designers that early insight is the only way we are actually going to get any real increases in overall building energy performance.